I am a PhD student in the Computer Vision Group at the Technical University of Munich (TUM), advised by Prof. Daniel Cremers. My research focuses on generative models for 3D motion understanding from egocentric videos, with the goal of enabling embodied agents to perceive and reason about object dynamics in real-world environments. I work on trajectory generation, flow matching, and physics-aware inference for egocentric scene understanding.

Previously, I worked on neural scene stylization using 3D Gaussian Splatting. I am broadly interested in problems at the intersection of generative modeling, 3D vision, and embodied perception.

Research Interests

Egocentric Vision

Generative Models

Flow Matching

3D Scene Understanding

Trajectory Generation

Embodied Perception

Neural Scene Stylization

Gaussian Splatting

News

March 2026: Our paper "EgoFlow: Gradient-Guided Flow Matching for Egocentric 6DoF Object Motion Generation" was accepted to CVPR 2026.

January 2026: Our paper "GMT: Goal-Conditioned Multimodal Transformer for 6-DOF Object Trajectory Synthesis in 3D Scenes" was accepted to 3DV 2026.

August 2024: Our paper "Gaussian Splatting in Style" was accepted to GCPR 2024.

Selected Publications

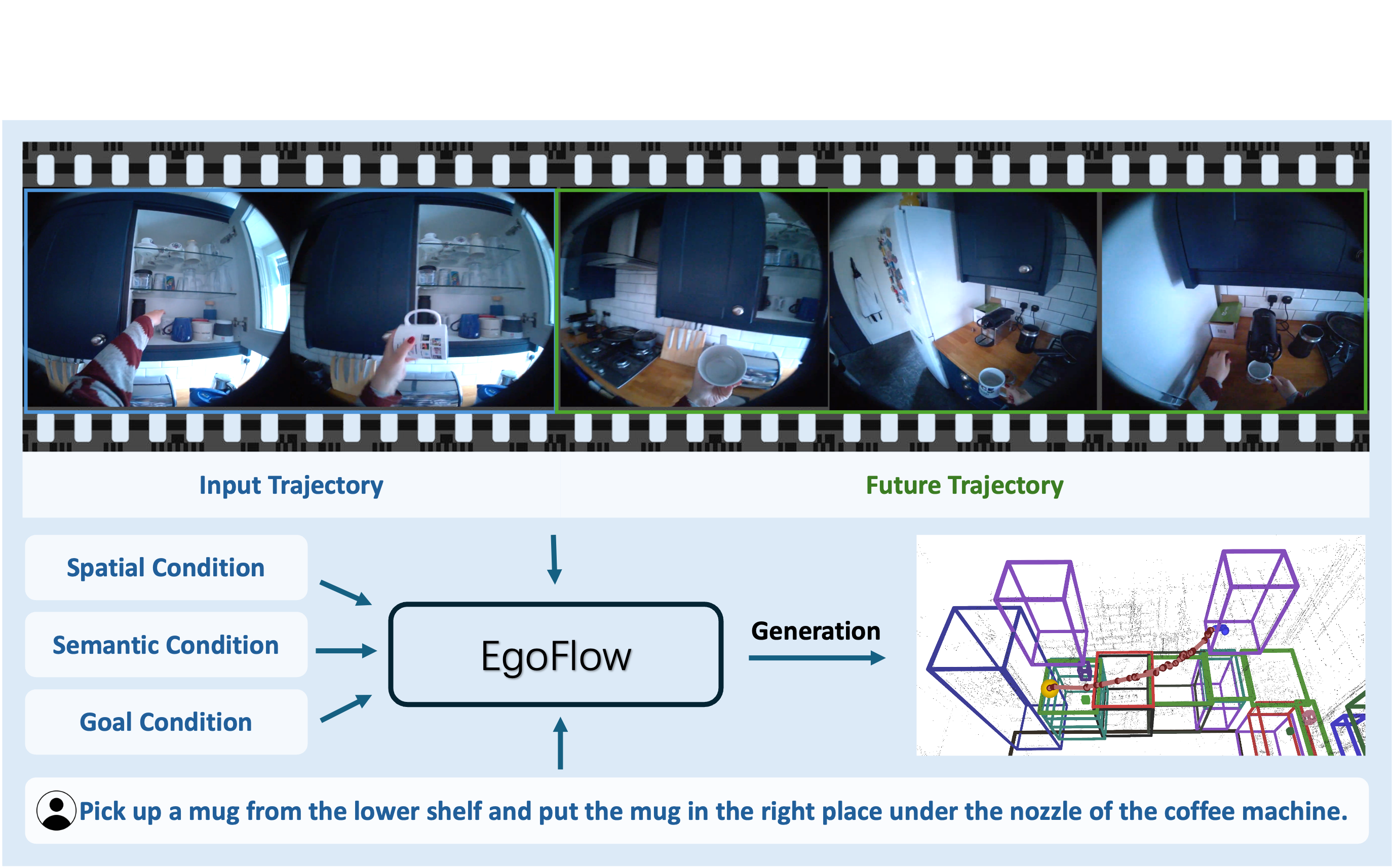

EgoFlow: Gradient-Guided Flow Matching for Egocentric 6DoF Object Motion Generation

CVPR 2026

A flow-matching framework with gradient-guided inference that generates physically plausible 6DoF object trajectories from egocentric videos, reducing collision rates by up to 79%.

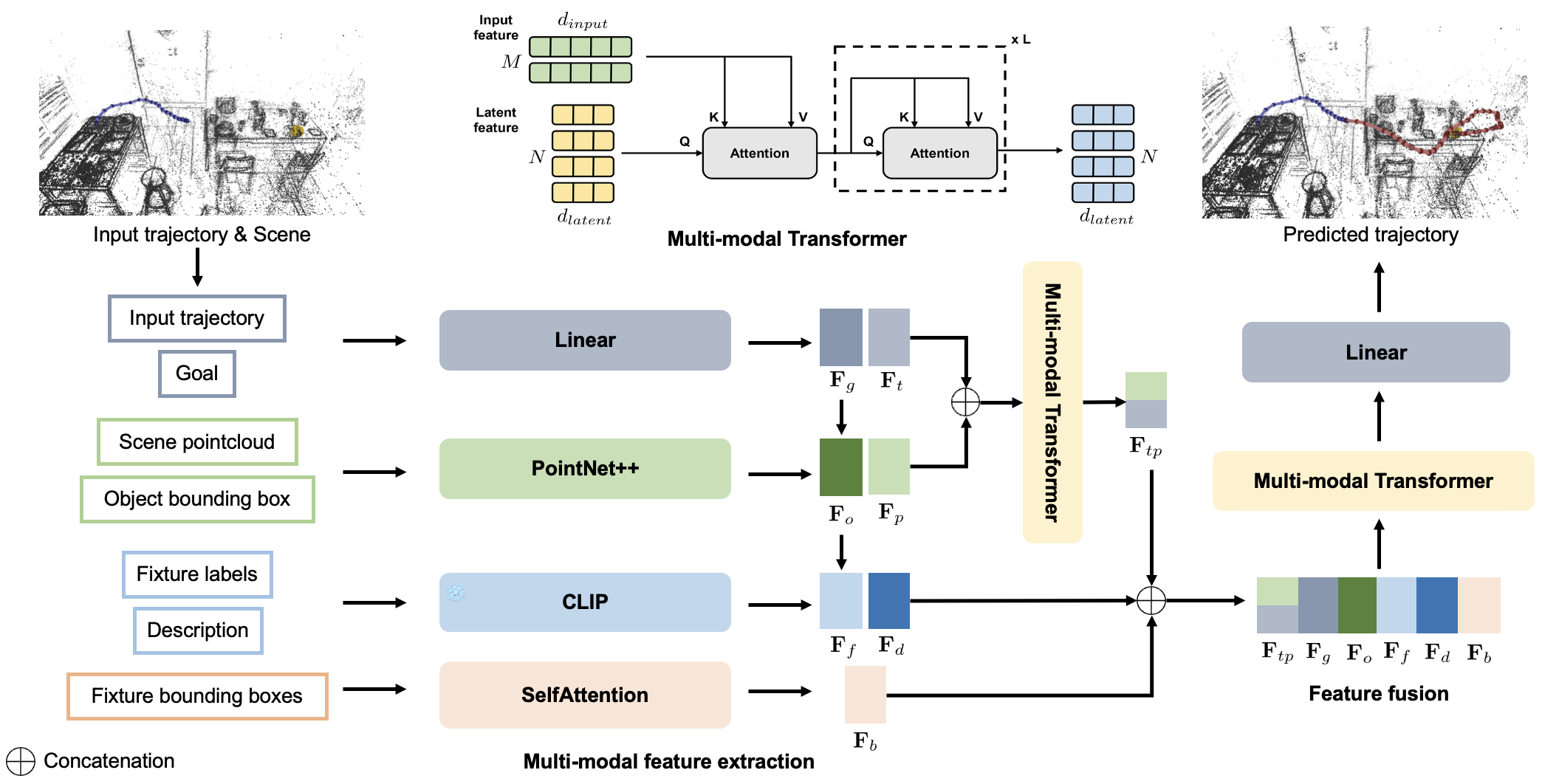

GMT: Goal-Conditioned Multimodal Transformer for 6-DOF Object Trajectory Synthesis in 3D Scenes

3DV 2026

A multimodal transformer that generates goal-directed 6-DOF object trajectories by jointly leveraging 3D geometry, point cloud context, and semantic cues.

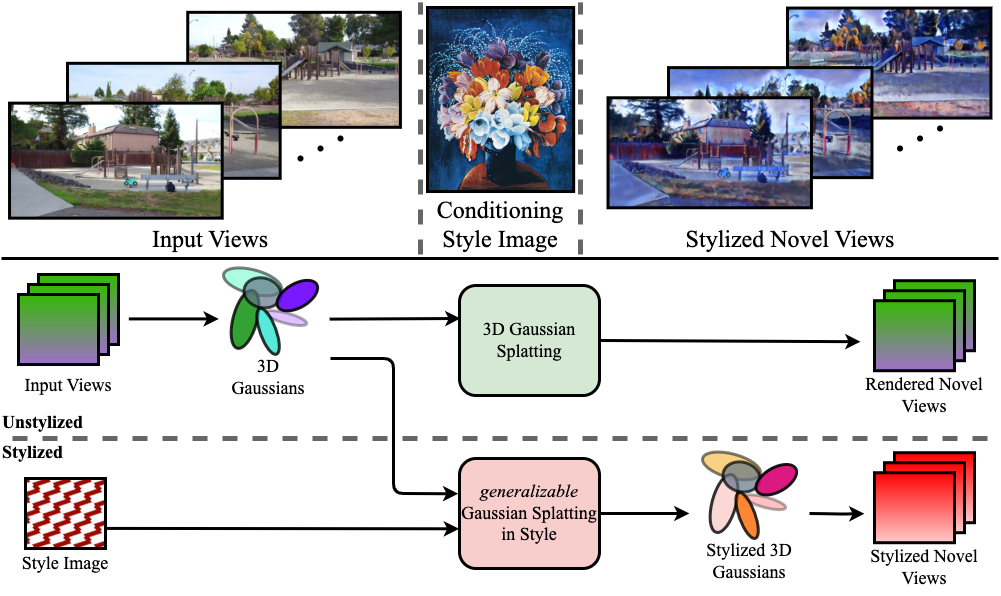

Gaussian Splatting in Style

GCPR 2024

The first work to employ Gaussian Splatting for scene stylization, extending neural style transfer to three spatial dimensions.